MarketLens

Why are NVIDIA H100 GPU rental prices surging by 40%

Key Takeaways

- NVIDIA's H100 GPUs have seen rental prices rise by nearly 40% since late 2025, defying the usual pattern in which older chips depreciate as new generations are released.

- The surge is driven by demand for AI compute from LLM development, enterprise adoption, and research, which far outstrips current supply.

- Persistent supply chain bottlenecks, particularly in HBM3 memory and advanced CoWoS packaging, are exacerbating the shortage and are unlikely to resolve quickly.

Why are NVIDIA H100 GPU rental prices surging by 40%?

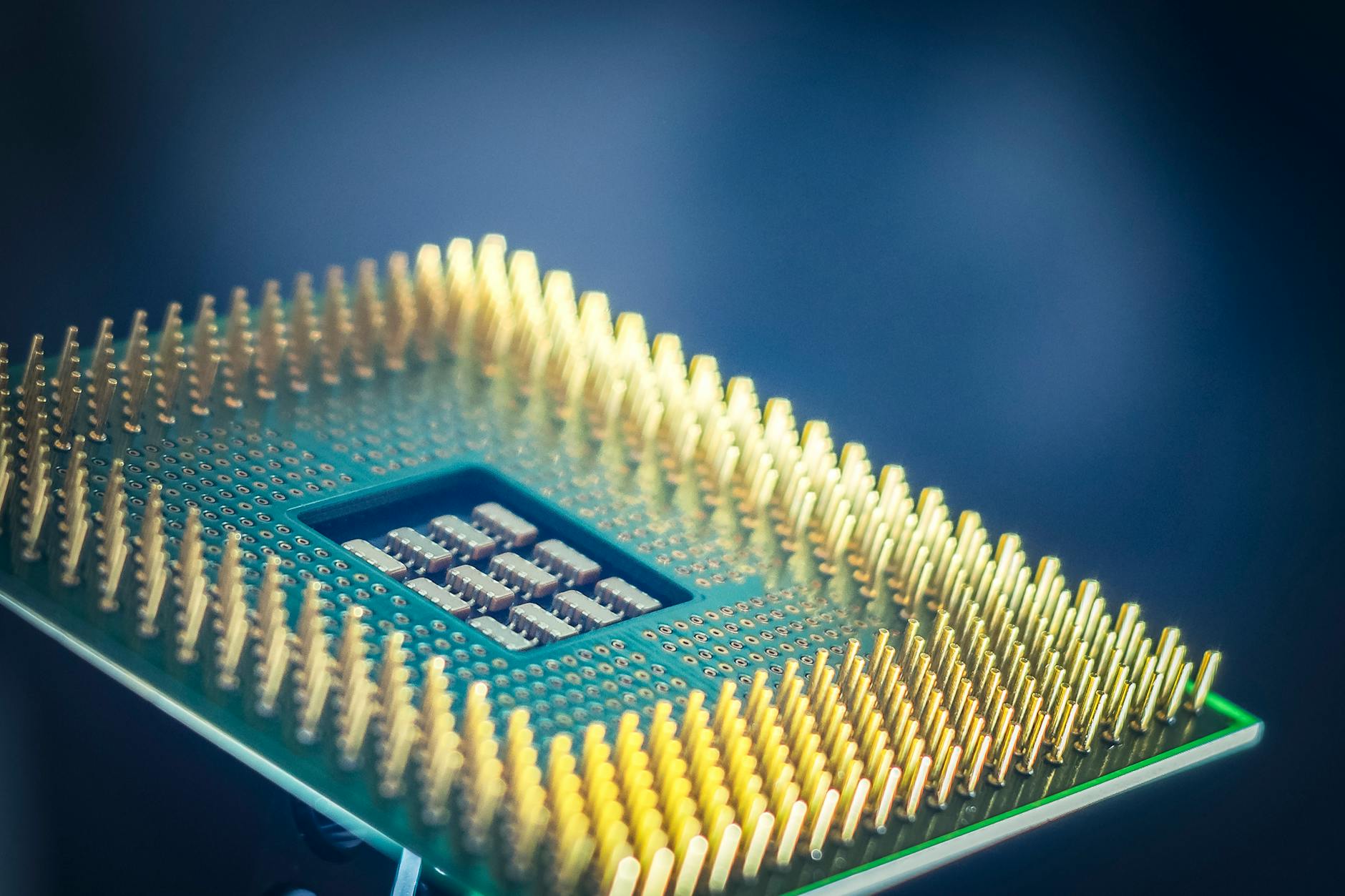

The NVIDIA H100 GPU, a cornerstone of modern AI infrastructure, has recently seen an unusual and significant price surge in its rental market. Normally, older-generation hardware depreciates once newer, more powerful chips arrive, such as NVIDIA's Blackwell (B200/GB200) series. Instead, H100 rental costs have climbed by nearly 40% since October 2025, from a low of $1.70 per hour to approximately $2.35 per hour by March 2026 for 1-year contracts. An increase of that size for a chip that is no longer NVIDIA's newest signals a deep imbalance between limited supply and demand for AI computing power.
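As a quick sanity check on these figures, the Python sketch below computes the percentage change and what it means for a single GPU over a one-year contract. It assumes the contract is billed for every hour of a 365-day year (8,760 hours), an illustrative simplification rather than how any specific provider bills; the function names are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 h; assumes billing for every hour of a non-leap year


def pct_change(old: float, new: float) -> float:
    """Return the percentage change from old to new."""
    return (new - old) / old * 100


def annual_cost(rate_per_hour: float) -> float:
    """Cost of one GPU at a flat hourly rate for a full year (illustrative)."""
    return rate_per_hour * HOURS_PER_YEAR


# H100 1-year-contract rental rates quoted in the text (USD per GPU-hour)
low_oct_2025 = 1.70
mar_2026 = 2.35

print(f"Rate change: {pct_change(low_oct_2025, mar_2026):.1f}%")
print(f"Annual cost per GPU: ${annual_cost(low_oct_2025):,.0f} -> ${annual_cost(mar_2026):,.0f}")
```

The result, about 38%, is consistent with the "nearly 40%" figure. For a hypothetical 1,000-GPU cluster, the per-GPU difference of about $5,700 a year would add up to roughly $5.7 million.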

This phenomenon isn't merely a fleeting market anomaly; it reflects a deeper, structural shift in the AI hardware landscape. The rapid adoption of open-weight models, coupled with an accelerating need for inference capabilities, has created an unprecedented wave of compute demand. Companies that have secured H100 access are holding onto it tightly, unwilling to give up capacity despite rising costs, which leaves very few instances available. This "great GPU shortage" extends beyond the H100, with even the newer Blackwell chips facing wait times stretching into mid-2026, indicating a systemic lack of AI computing power across the board.

The core driver is the explosive growth in AI applications, from large language model (LLM) development to enterprise AI adoption and academic research. Efficiency gains in AI models are not reducing demand; they are accelerating it, because cheaper, more capable models make tools like chatbots and media generation accessible to more users and use cases, so total usage grows faster than the cost per task falls. This continuous expansion of AI use cases means the need for computational horsepower is growing rapidly, creating a sustained demand shock that current manufacturing capacity cannot keep pace with.

This dynamic has transformed the GPU rental market into a highly constrained environment where price discovery is challenging and availability is paramount. The market is not behaving like a transparent commodity exchange; instead, it's characterized by scarcity economics specific to high-performance AI GPUs. For investors, this signals that the AI demand narrative is not only intact but intensifying, with the imbalance between supply and demand continuing to exert upward pressure on prices for critical hardware.

What's driving this unprecedented demand for AI compute?

The demand for AI compute, particularly for NVIDIA's H100 GPUs, stems from several factors that have converged to create a "compute crunch" unlike anything seen before. At the forefront is the relentless pace of LLM development. Anthropic, for example, has seen its annual recurring revenue (ARR) nearly triple in a single quarter, from $9 billion to over $25 billion, fueled by the success of models like Claude 4.6 Opus and Claude Code. Growth on that scale translates directly into a need for vast computational resources for both training and inference.

Beyond the frontier models, the rapid adoption of open-weight models and the accelerating demand for inference across various applications are significant contributors. As AI tools become more sophisticated and integrated into daily operations, from chatbots to advanced analytics, the underlying compute requirements scale dramatically. Enterprise AI adoption is also a major factor, with Fortune 500 companies actively building in-house AI capabilities, driving robust demand for GPUs even as prices rise. This isn't just about training new models; it's about deploying them at scale and continuously fine-tuning them for specific tasks.

The startup ecosystem, buoyed by substantial venture capital funding, is another engine of demand. These innovative companies are constantly pushing the boundaries of AI, requiring access to cutting-edge GPUs to develop and refine their offerings. Furthermore, increased academic funding for AI research institutions contributes to the overall demand, as researchers require powerful hardware to explore new algorithms and model architectures. This broad-based demand, spanning commercial, research, and open-source initiatives, creates a powerful tailwind for NVIDIA's products.

This demand isn't just about raw compute power; it's about the specific capabilities of GPUs like the H100. Its support for FP8 precision and strong performance for frontier model training make it indispensable for certain tasks, and that demand tends to arrive in concentrated, time-sensitive spikes, especially ahead of year-end training deadlines. Because organizations tie their compute needs to planning cycles and calendar deadlines, many are trying to secure capacity at the same time, which further tightens supply.

What are the key supply chain bottlenecks hindering GPU availability?

The persistent shortage of NVIDIA's H100 GPUs, despite the company's efforts to ramp up production, stems primarily from critical bottlenecks within the highly specialized semiconductor supply chain. The most significant constraint lies in High Bandwidth Memory (HBM3) supply. SK Hynix and Samsung, the key HBM3 manufacturers, are grappling with capacity limitations and low die yields, while competing demand from other high-performance AI accelerators, such as AMD's MI300 series, further strains supply. This HBM3 bottleneck creates lead times of 60 to 90+ days, causing delays to cascade through the entire GPU assembly process.

Another major factor is NVIDIA's strategic prioritization of its next-generation Blackwell (B100/B200/GB200) GPUs. Because Blackwell commands higher margins and underpins NVIDIA's future AI leadership, the company is shifting wafer allocation away from the mature H100 lines toward the newer architecture. With TSMC's advanced N3/N4 wafer capacity scarce, this means that while overall compute capacity is expanding, new H100 supply is being deprioritized. Hyperscalers are locking in Blackwell inventory, leaving enterprise buyers in extended queues for H100s as the focus shifts.

Advanced packaging capacity, specifically TSMC's CoWoS (chip-on-wafer-on-substrate) technology, is another critical choke point. Even though overall advanced packaging capacity has quadrupled in under two years, it remains a significant bottleneck. TrendForce projected TSMC's CoWoS capacity at around 75,000 wafers per month in 2025, rising to roughly 120,000 to 130,000 wafers per month by the end of 2026. While this growth is substantial, it is still unlikely to fully relieve current constraints, because demand continues to outpace even aggressive expansion.
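To put the TrendForce numbers in proportion, a small Python sketch can convert them into implied growth. It only rearranges the figures quoted above and assumes the 2025 level is the right baseline; it says nothing about demand, which is the other side of the bottleneck. The function name is hypothetical.

```python
def growth_pct(start: float, end: float) -> float:
    """Return percentage growth from start to end."""
    return (end / start - 1) * 100


# TrendForce CoWoS figures quoted in the text, in wafers per month
cowos_2025 = 75_000
cowos_end_2026_low, cowos_end_2026_high = 120_000, 130_000

low = growth_pct(cowos_2025, cowos_end_2026_low)
high = growth_pct(cowos_2025, cowos_end_2026_high)
print(f"Implied CoWoS growth, 2025 to end-2026: {low:.0f}% to {high:.0f}%")
```

A 60% to 73% increase in a little over a year is large, which is why the argument rests on demand growing even faster, not on capacity standing still.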

These structural constraints have stretched lead times for data-center GPUs to between 36 and 52 weeks, a stark indicator of the severe supply-demand imbalance. Memory pricing across both DRAM and NAND has gone parabolic, with LPDDR5 and DDR5 contract prices tracking toward 4x and 5x year-on-year, respectively, in Q1 2026. This is not a temporary disruption but a fundamental capacity constraint: demand exceeds what the system can physically produce, even when customers are willing to pay premium prices.

How does this impact NVIDIA's stock and its market position?

The persistent and surging demand for NVIDIA's H100 GPUs, coupled with the strategic shift toward Blackwell, paints a compelling picture for the company's market position and stock performance. Trading at $177.64 with a market capitalization of $4.32 trillion, NVIDIA has a valuation that reflects its dominant role in the AI revolution. Rental rates are set by cloud providers rather than by NVIDIA itself, but a nearly 40% rise in what the market pays for an "older" generation chip like the H100, even as newer models ship, signals the pricing power NVIDIA wields in a market desperate for its technology. This isn't just about selling chips; it's about controlling the very infrastructure of AI.

NVIDIA's financial fundamentals are robust, reflecting this strong market position. The company boasts impressive TTM margins: Gross Margin at 71.1%, Operating Margin at 60.4%, and Net Margin at 55.6%. These figures are indicative of a business with significant competitive advantages and high profitability, driven by the high value placed on its proprietary GPU architecture and software ecosystem. Furthermore, return metrics like ROE at 104.4% demonstrate exceptional capital efficiency, translating strong earnings into shareholder value.

The growth trajectory is equally compelling. NVIDIA reported 65.5% year-over-year revenue growth and 66.7% EPS growth for FY2026, showing its ability to scale financial performance rapidly amid surging demand. Analysts are highly bullish, with a consensus "Buy" rating from 79 analysts and a median price target of $275.00, roughly 55% above the current $177.64. This confidence rests on the expectation that AI demand will continue to outstrip supply for the foreseeable future, allowing NVIDIA to maintain its premium pricing and market share.
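The size of that gap can be checked with a one-line calculation. The Python sketch below is a plain ratio on the two prices quoted in this article; it is not a valuation model, and a price target is an analyst estimate that the calculation cannot validate. The function name is hypothetical.

```python
def implied_upside_pct(price: float, target: float) -> float:
    """Percentage gap between a price target and the current price."""
    return (target / price - 1) * 100


current_price = 177.64   # USD, as quoted in the text
median_target = 275.00   # USD, median analyst target quoted in the text

upside = implied_upside_pct(current_price, median_target)
print(f"Implied upside to median target: {upside:.1f}%")
```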

While the P/E ratio of 35.96 might appear elevated to some, it must be viewed in the context of NVIDIA's exceptional growth rates and its pivotal role in a transformative industry. The company is not just selling hardware; it's selling the foundational technology for the next era of computing. The ongoing supply constraints, while challenging for customers, paradoxically reinforce NVIDIA's indispensable status and pricing leverage, ensuring that every available chip contributes significantly to its top and bottom lines.
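One common way to put a P/E in the context of growth is the PEG ratio: P/E divided by the EPS growth rate expressed in percent. The article itself does not cite a PEG figure; the Python sketch below applies it to the numbers quoted here as an illustration, assuming the 66.7% FY2026 EPS growth is a fair growth input. That is a backward-looking choice, since PEG is often computed with forward growth estimates, and the implied EPS is only as accurate as the quoted P/E.

```python
current_price = 177.64   # USD, as quoted in the text
pe_ratio = 35.96         # P/E as quoted in the text
eps_growth_pct = 66.7    # FY2026 EPS growth, in percent, as quoted in the text

# Back out the earnings per share implied by price and P/E
implied_eps = current_price / pe_ratio

# PEG ratio: P/E divided by the EPS growth rate expressed in percent.
# Assumption: the growth input is trailing (FY2026), not a forward estimate.
peg = pe_ratio / eps_growth_pct

print(f"Implied EPS: ${implied_eps:.2f}")
print(f"Trailing PEG: {peg:.2f}")
```

A PEG below 1 is conventionally read as growth outpacing the multiple, which is the intuition behind viewing the P/E in context; with a forward growth estimate instead of the trailing figure, the ratio could differ substantially.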

What are the broader implications for the AI chip market and competition?

The current dynamics of the H100 market have profound implications for the broader AI chip landscape, shaping competitive strategies and investment flows. NVIDIA's ability to sustain strong demand and premium pricing for its H100s, even as the Blackwell series ramps up, highlights the immense barriers to entry and the power of its established ecosystem. Competitors, including AMD with its MI300 accelerators and various custom ASIC developers, face an uphill battle not just in matching performance but in securing critical supply chain resources like HBM3 and CoWoS packaging.

This environment of constrained compute is accelerating the shift towards heterogeneous compute strategies. Organizations are increasingly adopting multi-cloud approaches and exploring alternative accelerators (XPUs) like TPUs, Trainium, Gaudi, IPUs, and FPGAs to diversify risk and avoid single-vendor lock-in. While NVIDIA remains the dominant player, the scarcity is forcing innovation in software optimization, with techniques like quantization and pruning becoming more critical to maximize efficiency from available hardware. This pushes the industry to design for scarcity, treating compute as a precious resource.

The market structure is also evolving. Smaller cloud providers drove H100 rental prices down through aggressive competition in 2025, but the current shortage has reversed that trend, while hyperscalers like AWS, Azure, and GCP charge significantly more for comparable configurations. This suggests a consolidation of power among larger players who can secure bulk allocations directly from NVIDIA. The "GPU-as-a-service" market will increasingly differentiate on developer experience, reliability, and specialized features rather than on price alone.

Looking ahead, the compute crunch is a multi-year phenomenon. Supply chain experts predict stabilization around 2027, but baseline pricing is unlikely to return to pre-2024 levels. HBM pricing is expected to continue rising, and allocation rules will persist, meaning that access to high-performance AI chips will remain a strategic advantage. This environment encourages investment in new architectures and software innovations, but NVIDIA's deep integration across hardware and software, combined with its manufacturing partnerships, positions it strongly to navigate and potentially benefit from these evolving market conditions.

Is NVIDIA a buy amidst these market dynamics?

NVIDIA's current market position, characterized by unparalleled demand for its AI GPUs and robust financial performance, presents a compelling case for investors. The company's stock, trading at $177.64, reflects its leadership in a rapidly expanding and strategically vital industry. With a market cap of $4.32 trillion and a P/E ratio of 35.96, the valuation is certainly premium, but it's anchored by extraordinary growth and profitability metrics.

The consensus "Buy" rating from 79 analysts, with a median price target of $275.00, suggests significant confidence in NVIDIA's continued trajectory. This optimism is fueled by the structural nature of AI demand, which shows no signs of abating, and NVIDIA's strategic advantage in both hardware innovation and its comprehensive software ecosystem. The company's ability to command high prices for its H100s, even as newer Blackwell chips are introduced, underscores its pricing power and the inelasticity of demand for its products.

However, investors should also consider the potential for increased competition and the inherent risks of a highly concentrated supply chain. While NVIDIA is prioritizing Blackwell production, any unforeseen delays or manufacturing hiccups could impact future revenue streams. Despite these considerations, NVIDIA's role as the foundational enabler of the AI revolution, combined with its strong financials and clear growth runway, makes it a compelling long-term investment.

The current market dynamics, where demand consistently outstrips supply, create a favorable environment for NVIDIA to continue expanding its revenue and profitability. The strategic shift to Blackwell, while creating temporary H100 scarcity, positions the company for sustained leadership in the next generation of AI compute. For investors with a long-term horizon and a belief in the transformative power of AI, NVIDIA remains a core holding.
